Connected Audio-based Threat Detection on Raspberry Pi

2025-03-07 | By Maker.io Staff

License: See Original Project

Many smart devices can continuously monitor background noises and listen for threats like breaking glass or barking dogs. While this feature can be convenient, many users have privacy concerns and would rather not have corporations record and process every sound they make. This article investigates an alternative if you want to pair the convenience of automatic audio classification with more control over how your data is used and where it is processed. The project utilizes a Raspberry Pi to detect potentially dangerous sounds and communicate with other smart-home services to alert users.

Bill of Materials

This project utilizes the following components:

Qty Part

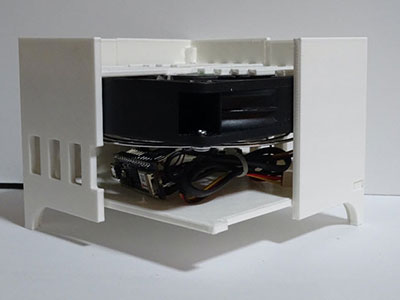

1 - Raspberry Pi 5

1 - Official Raspberry Pi 5 active cooling fan

1 - Official Raspberry Pi 5 power supply

1 - Compatible USB microphone

Defining the Problem

Before continuing, you should understand the basic machine learning process and the standard ML terminology. After covering the basics, the next step is to specify the problem the model should solve, and the data required to train it. This project targets specific audible threats with distinct and unique sounds:

Gunshots

Glass shatter sounds

Dog barks

Sirens

In theory, the system could detect many more types of sounds. However, each additional category considerably increases the required data and model training time. The model may also become too complex and perform poorly on embedded systems. Models with too many labels are often more prone to errors or blurry boundaries between predicted classes. Therefore, focusing on a few common scenarios is a practical approach.

Finally, define evaluation metrics to assess the trained model's performance and the target accuracy you want to achieve. This project uses a confusion matrix with F1 scores. Usually, an accuracy of around 90% is desirable. Lower accuracy risks missing or misclassifying too many critical events, while a model that scores near-perfectly on its training data may be overfitted and detect only samples very similar to that data.
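To make these metrics concrete, the following minimal sketch derives accuracy, precision, recall, and F1 scores from a confusion matrix. The function names and the example counts are made up for illustration; they are not results from this project, and Edge Impulse computes these values for you after training.

```python
def per_class_scores(labels, matrix):
    """Return {label: (precision, recall, f1)} from a confusion matrix.

    matrix[i][j] counts samples whose true label is labels[i] and whose
    predicted label is labels[j].
    """
    scores = {}
    for i, label in enumerate(labels):
        true_pos = matrix[i][i]
        predicted = sum(row[i] for row in matrix)  # column sum
        actual = sum(matrix[i])                    # row sum
        precision = true_pos / predicted if predicted else 0.0
        recall = true_pos / actual if actual else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        scores[label] = (precision, recall, f1)
    return scores


def accuracy(matrix):
    """Share of all samples that lie on the matrix diagonal."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total if total else 0.0


# Made-up counts for illustration only, not results from this project.
example_labels = ["bark", "gunshot", "harmless"]
example_matrix = [
    [16, 4, 0],   # true bark
    [4, 14, 2],   # true gunshot
    [0, 2, 18],   # true harmless
]

print("accuracy: %.2f" % accuracy(example_matrix))
for name, (p, r, f1) in per_class_scores(example_labels, example_matrix).items():
    print("%-8s precision=%.2f recall=%.2f f1=%.2f" % (name, p, r, f1))
```

Looking at per-label F1 scores rather than overall accuracy alone helps reveal when two classes are frequently confused with each other.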

Acquiring and Labeling Training Data

Machine learning requires a significant number of samples for training. This project utilizes supervised learning, so the data must also be labeled accordingly. You also need to provide negative samples to teach the system what it should not detect. In this instance, it should ignore regular background noises and non-threatening household sounds like clinking cutlery.

Pre-labeled datasets can be sourced from many platforms. For example, the Google AudioSet library provides annotated audio events with links to YouTube videos that include the samples and the appropriate timestamps. Kaggle is another popular source for ML datasets, and this project uses samples from this classifier dataset. For better specificity, record the background noise samples in your own home.

Connecting and Configuring a USB Microphone

This project uses a Raspberry Pi with a USB microphone to acquire accurate background noise samples for training and inference. Start by connecting the microphone, and then verify that the system detects the device using one of the following commands:

lsusb
arecord -l

The system should detect the connected device without additional measures, as shown in the following screenshot:

Make sure that the Pi detects the USB microphone.

Building the Training and Test Data Sets on Edge Impulse

Start by creating a new free Edge Impulse account. Then, create a new project and navigate to its data page by using either the button on the dashboard or the side navigation bar:

Click one of the highlighted buttons to add data to an Edge Impulse project.

On the data page, click the plus button and then use the upload option to add existing clips:

Use the highlighted buttons to upload existing audio clips for model training and testing.

Following these steps opens the upload dialog, where you can specify how the service processes and labels the files. For this project, upload 100 samples from each category (e.g., barking, sirens). Select the option to automatically split the samples into training and test data for even distribution. Finally, ensure you enter the target label for each category:

Configure the data uploader, as shown in this screenshot. Don’t forget to set the correct label.

After repeating these steps for each label, the data panel should look similar to this:

Verify that the samples look correct and are labeled as expected.

Click through some of the samples and verify that they are labeled correctly. Also, double-check that the data were split into the training and test sets at approximately an 80-20 ratio.
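Edge Impulse performs the split automatically, so no code is needed for this step. Purely to illustrate what an approximately 80-20 split per label means, here is a small sketch. The function name, the file names, and the label names are hypothetical.

```python
import random


def split_by_label(samples, test_ratio=0.2, seed=42):
    """Split (filename, label) pairs into train and test lists per label."""
    by_label = {}
    for filename, label in samples:
        by_label.setdefault(label, []).append(filename)

    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    train, test = [], []
    for label, files in sorted(by_label.items()):
        files = sorted(files)
        rng.shuffle(files)
        n_test = round(len(files) * test_ratio)
        test += [(f, label) for f in files[:n_test]]
        train += [(f, label) for f in files[n_test:]]
    return train, test


# Hypothetical file names; 100 clips per category as in the upload step.
samples = [("%s_%03d.wav" % (label, i), label)
           for label in ("bark", "glass", "gunshot", "siren")
           for i in range(100)]
train, test = split_by_label(samples)
print(len(train), "training clips,", len(test), "test clips")
```

Splitting within each label, rather than across the whole pool, keeps every category represented in both sets.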

Recording Samples Using the Raspberry Pi

Edge Impulse offers a companion application that lets the Raspberry Pi connect to the cloud platform to collect data and interact with the trained model. To get started, install at least version 20 of NodeJS – a JavaScript runtime environment – along with the required dependencies by typing:

sudo apt update
curl -sL https://deb.nodesource.com/setup_20.x | sudo bash -
sudo apt install -y gcc g++ make build-essential nodejs sox gstreamer1.0-tools gstreamer1.0-plugins-good gstreamer1.0-plugins-base gstreamer1.0-plugins-base-apps

Once the process finishes, install the Edge Impulse app with:

sudo npm install edge-impulse-linux -g --unsafe-perm

Finally, launch the application with the following command:

edge-impulse-linux --disable-camera

Deactivate the unneeded camera to prevent the program from throwing an error. When first launched, the app asks for your email address and password, and you’ll also have to select the microphone it should use for sampling:

This screenshot shows the initial configuration steps when first running the companion app.

The Raspberry Pi should now appear in the connected devices section of the website:

Verify that the Pi is connected to Edge Impulse.

Further, you can use the Pi to collect audio samples from the data acquisition tab:

Record background noise samples using the Pi and the USB microphone.

After setting the target label to “harmless,” you can use the sampling button to record five-second background noise samples. Make sure to collect around three minutes of sounds that usually occur naturally in your home. Keep the sounds as natural as possible. Do not exaggerate any of them, as the ML model will use these samples to learn what normal background noise sounds like in your dwelling. Don’t forget to transfer some harmless audio clips to the test set.

Preprocessing the Audio Samples

Building an ML model necessitates transforming the audio samples into a format the computer can understand. The samples all have slightly varying lengths, which makes them unsuitable for most neural networks. Neural networks — the ML model we want to build in this project — usually require fixed-length inputs. There are numerous ways to fulfill this requirement when dealing with data of different lengths. A typical approach involves padding shorter samples and splitting larger ones.

Since we don't have many samples, we can further increase the number of training samples by splitting each audio clip into shorter pieces. Overlapping the resulting pieces typically improves training accuracy because it preserves some of the continuity between snippets. However, it's vital not to overdo the snipping to a point where the samples become too short to represent the target label.
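The padding and overlapping described above can be sketched in a few lines. This is only an illustration, assuming a 16 kHz sample rate, 1000 ms windows, and a 500 ms stride; the function name is hypothetical, and dropping an incomplete trailing window is a choice of this sketch, not necessarily what Edge Impulse does internally.

```python
def split_into_windows(samples, window_len, stride, pad_value=0):
    """Cut a sample list into fixed-length, possibly overlapping windows.

    A clip shorter than one window is zero-padded to window_len. A trailing
    remainder that does not fill a whole window is dropped.
    """
    if len(samples) < window_len:
        return [list(samples) + [pad_value] * (window_len - len(samples))]
    windows = []
    start = 0
    while start + window_len <= len(samples):
        windows.append(list(samples[start:start + window_len]))
        start += stride
    return windows


# Assumed 16 kHz sample rate; 1000 ms windows with a 500 ms stride.
sample_rate = 16000
window_len = sample_rate        # 1000 ms
stride = sample_rate // 2       # 500 ms

clip = [0] * (3 * sample_rate)  # a silent 3-second stand-in clip
windows = split_into_windows(clip, window_len, stride)
print(len(windows), "windows of", len(windows[0]), "samples each")
```

With these values, a three-second clip yields five overlapping windows instead of the three it would produce without overlap.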

Lastly, the data must be transformed into numeric information that the computer can understand. This project's preprocessing step converts each sample's audible information to the frequency domain using the fast Fourier transform (FFT). Plotting the frequency content of successive short frames across the entire length of an audio snippet results in a spectrogram – a visual representation of the characteristic frequencies over time. The neural network uses a matrix representation of this information to learn characteristic patterns in the samples and associate them with the target labels.
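To show what such a matrix looks like, the sketch below builds a tiny spectrogram using only the Python standard library. It uses a naive discrete Fourier transform rather than an optimized FFT (both produce the same spectrum, the FFT is just much faster), and it omits the Mel filterbank step that Edge Impulse applies afterwards. The sample rate, frame length, hop size, and test tone are assumptions chosen for illustration.

```python
import cmath
import math


def magnitude_spectrum(frame):
    """Naive DFT; returns magnitudes for bins 0 .. len(frame) // 2."""
    n = len(frame)
    spectrum = []
    for k in range(n // 2 + 1):
        total = sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n)
                    for t in range(n))
        spectrum.append(abs(total))
    return spectrum


def spectrogram(samples, frame_len, hop):
    """One magnitude spectrum per frame: rows are time, columns frequency."""
    return [magnitude_spectrum(samples[start:start + frame_len])
            for start in range(0, len(samples) - frame_len + 1, hop)]


# Assumed values: 8 kHz sample rate and a pure 1 kHz test tone.
sample_rate = 8000
tone_hz = 1000
samples = [math.sin(2 * math.pi * tone_hz * t / sample_rate)
           for t in range(1024)]

spec = spectrogram(samples, frame_len=256, hop=128)
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / 256
print(len(spec), "frames, strongest frequency:", peak_hz, "Hz")
```

Each row of spec corresponds to one time slice and each column to one frequency bin, which is the kind of two-dimensional structure the classifier learns from.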

Creating the Training Pipeline in Edge Impulse

Edge Impulse hides most of the complexity from its users, and all that's left to do is create an impulse with the preprocessing tasks and model description. Click the "create impulse" option in the side toolbar to get started.

The first block is already there, and it can't be changed. It represents the time-series audio data. To create overlapping windows, set the window size to 1000ms and the stride to 500ms. Check the zero-pad data checkbox to ensure shorter samples are padded to the target length.

Follow the highlighted steps to build the impulse.

Then, add an MFE (Mel-filterbank energy) processing block. This unit takes the uniform snippets, performs FFT, and outputs a characteristic spectrogram for each one. Lastly, add a neural network classifier block and save the impulse.

Generating the Training Features

The next step requires extracting features from the samples for model training using the MFE block. Navigate to the MFE settings using the side toolbar and make sure that the “Parameters” tab is selected on the top of the MFE page:

This image illustrates how to extract training features from the audio samples.

This page lets you adjust multiple settings of the preprocessing step. You may need to change the filter number and FFT length parameters if no spectrogram is visible on the right. These spectra show the characteristic patterns of certain sounds, and you can use the controls in the top-right corner of the raw data panel to inspect different labels. When you’re done, use the button to save the parameters and navigate to the feature-generation tab at the top of the page:

This screenshot shows the feature extraction results.

Click the generate button to extract the features. The resulting 2D plot on the right-hand side of the page shows how well the features group the samples in each category into distinct clusters. In this instance, the separation is imperfect, and there is some overlap between the gunshot samples and barking. However, the samples cluster nicely for the most part, which should result in overall acceptable model accuracy.

Training the Machine Learning Model

Use the left sidebar again to navigate to the classifier settings and training page. The default settings will work fine for this project, and since the sample size is relatively small, training even 100 epochs should only take a short time. Use the button at the bottom of the page to save the settings and start the training process:

Follow the steps shown in this image to start training the neural network.

After training, the page shows the confusion matrix of all target labels together with the accuracy of all possible combinations and the F1-score of each target label:

Verify that the trained model performs sufficiently well for your use case.

As suspected, there is a significant overlap between the barking and gunshot sounds, and the model performs relatively poorly in differentiating the two classes. However, what is essential is that the model separates harmless sounds from any other harmful ones with an accuracy of almost 90%. That accuracy is sufficient for this application, especially given the small number of training samples. Introducing more (or better) samples, primarily gunshot sounds and clips of barking dogs, could increase the accuracy.

Testing, Tweaking, and Deploying the Model

After training, the model is ready to classify new audio samples recorded by the Raspberry Pi microphone. However, an optional step can help tweak the model's type-1 and type-2 error rates. Doing so adjusts whether the system is more prone to false or missed alarms. In this case, we prefer the system to be cautious: It should rather suspect something is happening than miss any potentially dangerous situations. Select the performance calibration option in the sidebar and then set the background noise label option to use the “harmless” label. Finally, click the green start button to run the automated test:

Configure the automated test, as shown in this image. Click the green button to start the testing process.

During this test, Edge Impulse generates new audio samples of realistic background noise and overlays them with various samples from the validation set. The framework then records how often the model’s predictions are accurate. After each run, it tweaks some of the model’s options, and it presents users with settings to choose from after testing concludes:

Select the tweaked settings that best match your expectations.

Note that this model performs rather poorly on average on unseen data. That is likely due to the reduced number of training samples and the fact that the training background noise was not very varied. Therefore, the system often mistakenly detects threats when tested with different background ambiance.

Select the point that minimizes the false rejection rate (the y-axis), even if that means accepting a higher false activation rate (the x-axis), to make the model overly cautious rather than too relaxed. Then, click the save button and navigate to the deployment tab using the sidebar.
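The trade-off between the two error rates can be illustrated with a short sketch. The function name and the scores are made up for illustration and are not measurements from this project; Edge Impulse's performance calibration tunes more than a single threshold, so treat this only as a simplified picture of the idea.

```python
def error_rates(scored_events, threshold):
    """Return (false_activation_rate, false_rejection_rate).

    scored_events holds (threat_score, is_real_threat) pairs. An alarm is
    raised when threat_score is strictly greater than threshold.
    """
    harmless = [s for s, is_threat in scored_events if not is_threat]
    threats = [s for s, is_threat in scored_events if is_threat]
    false_activations = sum(1 for s in harmless if s > threshold)
    false_rejections = sum(1 for s in threats if s <= threshold)
    far = false_activations / len(harmless) if harmless else 0.0
    frr = false_rejections / len(threats) if threats else 0.0
    return far, frr


# Made-up scores for illustration; not measurements from this project.
events = [
    (0.95, True), (0.80, True), (0.55, True), (0.35, True),
    (0.60, False), (0.40, False), (0.20, False), (0.10, False),
]

for threshold in (0.3, 0.5, 0.7):
    far, frr = error_rates(events, threshold)
    print("threshold %.1f: false activations %.2f, false rejections %.2f"
          % (threshold, far, frr))
```

Lowering the threshold reduces missed threats at the cost of more false alarms, which matches the cautious configuration chosen here.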

On the model deployment page, select Linux (ARMv7) as the target platform for deployment on the Raspberry Pi. Then, select the quantized model to reduce the complexity, model size, and resource requirements for inference on embedded devices. Finally, click the build button. The resulting model is automatically downloaded to your computer so you can transfer the file to the Raspberry Pi.

Follow the steps outlined in this screenshot to build and deploy the model.

Alternatively, you can also download the model directly to the Pi using the companion app from before:

edge-impulse-linux-runner --download audio_detector_model.eim

Performing Inference Offline

After deploying and downloading the model, the Raspberry Pi can perform inference without an active Internet connection. Local inference reduces lag and ensures that audio samples are not uploaded to remote servers, giving users complete control over their data and privacy.

Edge Impulse offers multiple SDKs for high-level programming languages like Python and C++. The Python SDK requires Python 3.7 or newer, and it also needs some external libraries, which can be installed using the following command:

sudo apt install libatlas-base-dev python3-pyaudio portaudio19-dev

Then, use the Python package manager to install a few additional libraries:

pip3 install opencv-python

Once these processes finish, the SDK can be installed using pip:

pip3 install edge_impulse_linux -i https://pypi.python.org/simple

The following simple Python program uses the trained ML model to continuously record short samples using the first audio input device and perform inference on the snippet. It then prints the inference result to the console if the program detects a potentially threatening sound:

import os

from edge_impulse_linux.audio import AudioImpulseRunner

runner = None
device = 1  # ID of the audio input device
model = 'audio_detector_model.eim'


def label_detected(label_name, certainty):
    print('Detected %s with certainty %.2f' % (label_name, certainty))
    # TODO: Perform other actions if required


def setup():
    # Build the full path to the model file next to this script
    dir_path = os.path.dirname(os.path.realpath(__file__))
    return os.path.join(dir_path, model)


def teardown():
    # Stop the runner to release system resources
    if runner:
        runner.stop()


if __name__ == '__main__':
    model_file = setup()
    with AudioImpulseRunner(model_file) as runner:
        try:
            model_info = runner.init()
            labels = model_info['model_parameters']['labels']
            for res, audio in runner.classifier(device_id=device):
                for label in labels:
                    score = res['result']['classification'][label]
                    # Skip the "harmless" background label so that only
                    # threatening sounds are reported
                    if label != 'harmless' and score > 0.5:
                        label_detected(label, score)
        except RuntimeError:
            print('Error')
        finally:
            teardown()

The code starts by importing the Edge Impulse SDK. It contains a few variables that hold the inference runner object, the microphone ID, and the model file name.

The code defines some custom helper functions. The label_detected function outputs the prediction result and the certainty. However, it could perform additional actions, such as sending messages to an existing smart home setup. The setup function finds the Python script's parent folder and appends the model file name to build a full path. Lastly, the teardown function stops the model runner to release system resources when the app quits.

The main block calls the setup function on startup. It then creates a new AudioImpulseRunner object for performing inference locally. Within the try block, the application initializes the runner and loads all available labels. For every classified audio snippet, it then iterates over the labels and checks whether the model detected any of them. If the model reports a detection with a certainty value over 50%, the script calls the label_detected helper with the label name and certainty.
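The label check can also be pulled into a small helper that is easy to try without a microphone or model file. The sketch below is one possible way to do that: the function name is hypothetical, the dictionaries are hand-written stand-ins for res['result']['classification'], and the label names other than "harmless" are assumptions about how the classes were named during upload.

```python
def detected_threats(classification, threshold=0.5, ignored=("harmless",)):
    """Return (label, score) pairs above threshold, strongest first."""
    hits = [(label, score) for label, score in classification.items()
            if label not in ignored and score > threshold]
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Hand-written stand-ins for res['result']['classification'].
example = {"bark": 0.08, "glass": 0.81, "gunshot": 0.06,
           "harmless": 0.03, "siren": 0.02}
quiet = {"bark": 0.02, "glass": 0.01, "gunshot": 0.02,
         "harmless": 0.94, "siren": 0.01}

print(detected_threats(example))
print(detected_threats(quiet))
```

Keeping the threshold and the ignored labels as parameters makes it simple to experiment with stricter or more relaxed settings later.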

Interfacing With Smart Home Platforms Using MQTT

This final section of the project discusses how to use MQTT — a popular communication protocol for exchanging data between IoT and smart-home devices — to publish the ML model’s predictions to an MQTT broker. This broker can then relay the messages to other smart home platforms like Apple HomeKit or Amazon Alexa. It’s recommended that you familiarize yourself with the basics of MQTT on the Raspberry Pi if all of this is new to you.

You can use an existing MQTT broker and publish messages directly to that one or set up a new one on the Raspberry Pi, for example, using Mosquitto. To set up a new broker, start by installing the following packages on the Pi:

sudo apt install mosquitto mosquitto-clients

The MQTT broker should automatically start up and be ready to accept clients and requests. Next, install the paho-mqtt library for Python by typing:

pip3 install paho-mqtt

This library lets the Python program publish messages to the MQTT broker whenever the ML model detects a dangerous situation. Start by adding the following import statement at the start of the Python script:

import paho.mqtt.client as mqtt

Next, define the following variables for the MQTT broker, the client, and the topic label:

client = None
broker_address = 'localhost'
broker_port = 1883
out_topic = "pi/threat_detected"
threat_detected = "false"

Adjust the broker address if your project communicates with an external MQTT broker that is not running on the Pi. Afterward, expand the setup function by adding the MQTT client setup code so that it looks as follows:

def setup():
    global client
    client = mqtt.Client()
    client.on_connect = mqtt_connected
    client.on_message = mqtt_message_received
    client.connect(broker_address, broker_port, 60)
    client.loop_start()
    dir_path = os.path.dirname(os.path.realpath(__file__))
    return os.path.join(dir_path, model)

The updated code now additionally creates a client object and stores it in the global client variable. It also registers two callback functions that handle connection events and incoming MQTT messages. The event handlers look as follows:

def mqtt_connected(client, userdata, flags, rc):
    client.subscribe("pi/reset")
    client.publish(out_topic, threat_detected)


def mqtt_message_received(client, userdata, msg):
    global threat_detected
    if msg.topic == "pi/reset":
        threat_detected = "false"
        client.publish(out_topic, threat_detected)

The mqtt_connected callback is called whenever a connection starts. It subscribes to the pi/reset topic to let users or other devices reset a previously triggered alarm. The function then publishes the initial trigger state. The mqtt_message_received helper function handles incoming messages. In this instance, it only resets the threat_detected flag and publishes the result.

The teardown method also needs to be adjusted to include a client disconnect call:

def teardown():
    if client:
        client.loop_stop()
        client.disconnect()
    if runner:
        runner.stop()

Finally, you can publish messages to the MQTT broker by expanding the label_detected function like so:

def label_detected(label_name, certainty):
    global threat_detected
    threat_detected = "true"
    client.publish(out_topic, threat_detected)

The updated function sets the threat_detected flag and sends it to the MQTT broker using the publish method.
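Because the classifier loop can report the same sound many times in a row, one possible refinement is to publish only when the flag actually changes. The following sketch shows that idea in isolation. The ThreatLatch class is hypothetical and not part of the original script, and the lambda stands in for the MQTT client's publish method so the example runs without a broker.

```python
class ThreatLatch:
    """Latching alarm flag that publishes only when its value changes."""

    def __init__(self, publish, topic="pi/threat_detected"):
        self._publish = publish  # e.g. the MQTT client's publish method
        self._topic = topic
        self.state = "false"

    def _set(self, new_state):
        if new_state != self.state:
            self.state = new_state
            self._publish(self._topic, self.state)

    def trigger(self):
        """Call when the model detects a threatening sound."""
        self._set("true")

    def reset(self):
        """Call when a message arrives on the reset topic."""
        self._set("false")


# Stand-in for client.publish that records messages instead of sending them.
sent = []
latch = ThreatLatch(lambda topic, payload: sent.append((topic, payload)))

latch.trigger()  # first detection: published
latch.trigger()  # repeated detection: state unchanged, nothing published
latch.reset()    # reset request: published
print(sent)
```

In the real script, trigger would be called from label_detected and reset from the mqtt_message_received callback.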

Relaying MQTT Messages to Apple HomeKit

I finished the project by relaying the MQTT messages sent to the broker on the Pi to my HomeKit environment. Doing so requires a mapping layer that lets the Pi interface with Apple’s infrastructure. To get started, add the Homebridge repository and its GPG key to the package manager:

curl -sSfL https://repo.homebridge.io/KEY.gpg | sudo gpg --dearmor | sudo tee /usr/share/keyrings/homebridge.gpg > /dev/null
echo "deb [signed-by=/usr/share/keyrings/homebridge.gpg] https://repo.homebridge.io stable main" | sudo tee /etc/apt/sources.list.d/homebridge.list > /dev/null

Then, install Homebridge on the Raspberry Pi by typing the following commands:

sudo apt-get update
sudo apt-get install homebridge

Once installed, finish the setup process by opening the Homebridge UI in a web browser at http://raspberrypi.local:8581/. Homebridge needs a plugin to translate MQTT messages into control elements HomeKit can understand. Navigate to the plugins section in the web UI and install the homebridge-mqttthing plugin:

Install the plugin shown in this screenshot.

Once the process finishes, add the following accessories to the global JSON configuration so that the plugin listens to the topics used by the Python program:

"accessories": [
    {
        "type": "leakSensor",
        "name": "Potential Threat",
        "url": "mqtt://localhost:1883",
        "topics": {
            "getLeakDetected": "pi/threat_detected"
        },
        "accessory": "mqttthing"
    },
    {
        "type": "switch",
        "name": "Reset Threat Detection",
        "url": "mqtt://localhost:1883",
        "topics": {
            "getOn": "false",
            "setOn": "pi/reset"
        },
        "accessory": "mqttthing"
    }
],

This snippet configures the mqttthing plugin installed earlier. It defines the MQTT broker address and gives the accessories recognizable names displayed in the HomeKit app. Next, it states which topics to use to obtain the threat detection flag and reset the alarm. After restarting the Homebridge service, the accessories page should show two new widgets, and, when triggered, the Potential Threat accessory should indicate a detection:

After I added the device to HomeKit and played a dog barking sound on my phone, the app showed that the model detected a potential threat.

Summary

Developing an ML model starts with acquiring suitable training data and labels if necessary. Training data sets are available from many sources, but augmenting the data with self-collected samples helps build more specific models.

Using Edge Impulse, training a complex model is as easy as uploading the data, defining a new impulse, and adding processing and classification blocks. This project uses FFT to translate audible information into a format a neural network can understand before training the model. The resulting model is then fine-tuned by selecting a configuration that favors false alarms over missed alarms.

The Edge Impulse Python SDK enables offline inference to protect users' privacy. The predicted labels are then broadcast to other parts of a home automation system using MQTT and Homebridge.